Research

Research Projects

ACMP (Innovationsfonden)

Augmented Cellular Meat Production (ACMP): The project is focused on a collaborative robot cell concept as an alternative to the serial production line that is currently used in major slaughterhouses. With a robot cell, where a robot and an operator share tasks and workload, we get the strength of the robot and the flexibility of the operator. This will help reduce the repetitive stress injuries that plague workers in the industry, and it will ease the transition to fully automated robots that can perform the entire process by themselves. The project requires innovation in the way humans and robots collaborate and the development of robots that can adapt to the high variation in both products and the interaction with humans.

We are focusing on safe and efficient communication for collaboration between operator and robot, aided by augmented reality (AR) technologies, as a prerequisite to establishing and maintaining trust between operator and robot. There are two stages to developing safe human-robot collaboration (HRC) in the workplace. The first stage is developing the interaction methods that facilitate the collaboration itself. This involves developing and evaluating AR interfaces through which the system informs the operator of current task objectives and the expected movements of the robot. It also involves tracking the operator’s motions in order to interpret their level of trust, so the system can adapt its motion patterns accordingly. The second stage is developing methods for measuring the operator’s level of trust towards the collaborative robot while working in close proximity to it. Because the robot will be equipped with either sharp tools or powerful gripping tools, there is a high risk that the operator will feel unsafe around it, which will likely harm efficiency in high-intensity production lines. The goal is to develop methods for the system to interpret the operator’s trust towards the robot based on their movement correlated with the robot’s actions and the current task. We will use this to have the robot adapt its movement patterns and communication according to the comfort of the operator, to maintain their trust throughout the collaboration.

Contact: Matthias Rehm

BYOR (Helsefonden, Spar Nord Fonden)

Build your own robot (BYOR) for independent living is an exploratory project with the overall goal of developing a concept of social robots as a do-it-yourself aid that can be broadly used by different groups of people with cognitive and physical impairments. Based on empirical data from a residential home for people suffering from severe impairments due to acquired brain injury, the project focuses on developing robots for daily cognitive guiding and reminding tasks. The vision for the project is to create a toolbox for developing individualized solutions that match the needs of each specific citizen. By creating the possibility for experimenting with the task of building real and functional robots, we aim at increasing citizens’ independence and quality of life, while at the same time strengthening social competences and supporting the feeling of being in control over one’s own life. The project thus has two layers: (i) co-creation of individualized social robots, and (ii) evaluation of the robot in use.

Contact: Matthias Rehm

This project has been partly funded by Helsefonden and Spar Nord Fonden.

Brain-controlled exoskeletons for stroke rehab (Velux Fonden)

Technology transfer from prototype to home use

A promising new rehabilitation tool is a brain-controlled exoskeleton (also known as a brain-computer interface) that can help the patient execute the intended movement of the affected limb. This rehabilitation tool is still in the development and evaluation phase. Thus, there is very limited knowledge about how the tool must be designed and implemented so that patients want to use it and so that it can be moved out into the rehabilitation clinic or the patient's home. This will be investigated in a 3-year project supported by VELUX FONDEN.

The primary investigator is Mads Jochumsen with a collaborative research team consisting of Birthe Dinesen, Hendrik Knoche (Department of Architecture, Design and Media Technology), Troels W. Kjær (Roskilde Hospital, Copenhagen University), and Preben Kidmose (Århus University).

Project website: https://www.labwelfaretech.com/projects/bci-exoskeleton/

Contact: Hendrik Knoche

DrapeBot

The DrapeBot project aims at human-robot collaborative draping. The robot is supposed to assist during the transport of the large material patches and to drape in areas of low curvature. The role of the human is to drape regions of high curvature. To enable an efficient collaboration, DrapeBot develops a gripper system with integrated instrumentation, low-level control structures, and AI-driven human perception and task planning models. All of these developments aim at a smooth and efficient interaction between the human and the robot. Specific emphasis is put on trust and usability, which will be the main contribution of the HRI lab to the project. The DrapeBot project runs over a period of four years from January 2021 to December 2024.

Project website: Drapebot.eu

Contact: Matthias Rehm

This project has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No 101006732.

EXOTIC (AAU)

EXOTIC - Assistive Personal Robotics Platform With An Exoskeleton Using The Tongue For Intelligent Control As A Use Case

The Exotic project is an interdisciplinary effort that aims at providing tetraplegics a way to act on their environment - an exo-skeleton arm controlled through an intra-oral interface.

Project website: https://www.strategi.aau.dk/Forskning/Tv%c3%a6rvidenskabelige+forskningsprojekter/EXOTIC/

Contact: Hendrik Knoche

Robot Digital Signage (Innovationsfonden)

The use of robots in digital signage is on the verge of a major breakthrough, as robots can move physically and thereby improve communication and interaction significantly compared to stationary digital terminals. However, existing robot platforms are still difficult for most programmers to use, not suited for human-robot interaction, or simply too expensive. This project will revolutionize the market for digital signage by developing a high-quality robot platform featuring state-of-the-art robot navigation algorithms that are easy to use for app programmers everywhere. This will make it fast for developers without a robotics background to create and maintain new robot applications, which can be used for interactive digital signage and as a tool for wayfinding, information, entertainment and advertising within retail, transport and healthcare. The robot will tap into the market for service robots, which is expected to reach USD 23.90 billion by 2022, at an annual growth rate of 15.18%.

Project websites: https://polaris-robot.dk, https://vbn.aau.dk/da/projects/robot-digital-signage

Contact: Karl Damkjær Hansen

Ph.D. Projects

EXPLORING HUMAN-ROBOT COLLABORATION IN THE WILD: a techno-anthropological investigation of hospital staff and mobile robots collaborating

The purpose of this PhD project is to research how everyday human-robot collaboration unfolds, and what issues arise, in settings where robots are no longer under surveillance in synthetic laboratory settings but are deployed as part of the service staff in hospitals.

The working relationship between humans and robots, human-robot collaboration (HRC), is a result of new applications for robots. They are no longer heavyweight technologies isolated in cages in laboratory or industry settings, but are now also part of contexts other than the ones they were developed in. The robots now function in more complex settings, alongside humans: the robots are being deployed ‘in the wild’.

This PhD project is concerned with human-robot collaboration in one of these wild settings, Danish hospitals, where robots have been deployed to reduce clinical overload and positively affect the well-being and health of clinicians by assisting in the transportation tasks that make up a large part of the everyday routines in the hospitals.

But the collaboration can be challenging for both human and robot: the hospital staff (who may have concerns about the robot and the difficulties it might create when integrated into flexible hospital environments) must adapt to collaborating with the robots, while the robot must adapt to an environment that is no longer highly controlled. When humans and robots collaborate in the wild, unexpected situations and issues occur, and it is these that the present PhD project is concerned with and investigates through ethnographic methods.

Contact: Kristina Tornbjerg Eriksen

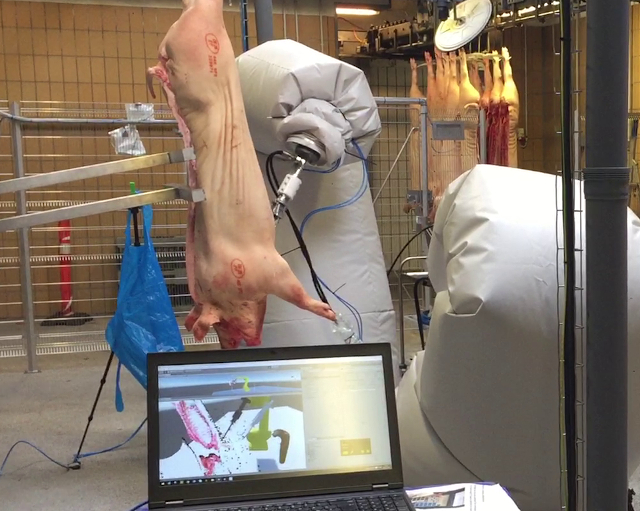

Adaptive and Self-learning Slaughterhouse Robots (Ph.D. Project)

The PhD project is concerned with building vision-based robot controllers that are capable of intelligently adapting to the biological objects found in industrial settings. This includes research in the application of learning algorithms and the role of humans in training robots.

Contact: Mark Philip Philipsen

Beyond one-to-one human robot interaction (Ph.D. Project)

This PhD works with beyond one-to-one human-robot interaction and, more specifically, how to design such interaction. Within the field of HRI, a clear tendency away from dyadic interaction towards the study of beyond one-to-one interaction can be observed. The shift towards more complex systems involving multiple human and robotic actors will be the primary focus of this Ph.D. project. The initial step will involve the identification of challenges, past trends, and current as well as future opportunities within the beyond one-to-one interaction context. Furthermore, the focus will be on the study of the interaction of robotic systems, how groups of people interact with robots, as well as mixed groups of robots and humans in settings such as collaborative work or tutoring contexts.

Contact: Eike Schneiders

Close-Proximity Human-Robot Collaboration (Ph.D. Project)

Introducing human-robot collaboration (HRC) and augmented reality (AR) to a workplace can assist in strenuous physical tasks and present task-relevant data in seamless and non-obstructive ways, improving workflow and welfare. The PhD is carried out in the context of the Augmented Cellular Meat Production (ACMP) project, which aims to enhance production lines using robotics and AR displays, with the robots serving a collaborative role in close proximity to human operators.

Contact: Kasper Hald

Creative Design Processes through Interactive Robotics (Ph.D. Project)

The PhD study investigates design methods and procedures for establishing a direct relation between creative design processes in the field of architecture and interactive robotic fabrication, with emphasis on how the cognitive design processes are influenced by interactive real-time human-material-robot processes.

Contact: Mads Brath Jensen

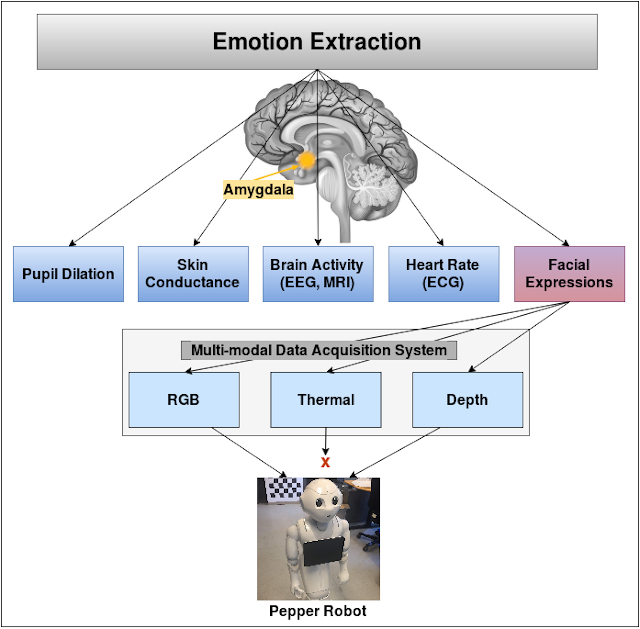

Effective Human-Robot Interaction and Monitoring for TBI Patients

This project focuses on extracting social signals from facial expressions and using the emotional cues for productive human-robot interaction with people with traumatic brain injury.

It is always challenging for people with motor, hearing, and speech inhibitions to communicate and socialize. This project explores effective strategies to improve the quality of life of the residents at a Danish neurocenter. To this end, we have been working on extracting social signals from facial expressions and using the emotional cues for productive human-robot interaction. This project has three phases:

- Development of the multi-modal database of real patients in specific scenarios

- Use of deep transfer learning approaches to make a unique and customized facial expressions recognition (FER) system

- Deployment of this FER system through the Pepper robot to enhance human-robot interaction and social interaction.

The Pepper robot performs the role of assistive technology for both residents and staff members. In the case of residents, it provides stimulus to engage more socially through its speech and visual input by analyzing mood in particular therapy sessions. For staff members, it acts as a monitoring and feedback tool, allowing them to monitor emotional reactions over therapy sessions and to adapt exercises based on this additional input.

Contact: Chaudhary Muhammad Aqdus Ilyas

Proactive Human Robot Interaction (Ph.D. Project)

This PhD within the field of Proactive Human-Robot Interaction is concerned with investigating and mapping this relatively young subfield of Human-Robot Interaction as well as making advancements to a selection of methods and technologies applied within the field. Proactive HRI is concerned with endowing robots with proactive human behavioural traits or capabilities, mainly with the purpose of increasing intuitiveness and efficiency in the human-robot interaction. Anticipatory behaviour and, in relation to this, intent estimation, confusion or ambiguity resolution, contextual awareness, and task selection and planning are some of the subjects studied in this discipline; furthermore, methods for enabling robots to autonomously initiate interaction are an area of study within this field.

Contact: Marike Koch van den Broek

Robot Planning and Human Interaction (Ph.D. Project)

The goal of this Ph.D. study is to investigate how an adaptive, generalizable and robust mind can be developed for mobile robots, constituting a general framework softening the gaps between the extremely specialized algorithms developed in the traditional core research areas of robotics. This is done with a special emphasis on developing the cognitive mechanisms needed to form an artificial theory of mind - an important prerequisite for natural interaction with humans.

Contact: Malte Rørmose Damgaard

Semi-autonomous Control of an Assistive Robotic Manipulator

Computer vision is applied in the context of an assistive robotic manipulator with the purpose of making it easier for disabled persons to complete common everyday tasks. The computer vision is used to capture and interpret the immediate environment of the user in order to predict the intention of the user. The manipulator will hence be controlled in a semi-autonomous manner where the system will provide assistance based on the predicted intention.

Contact: Stefan Hein Bengtson